Market research teams rely on qualitative data, like open-ended survey responses, reviews, and comments, because it captures how people actually feel in their own words. The emotions behind those words are what sentiment analysis looks for.

Sentiment analysis is the process of detecting both what people are talking about (the topics) and how they feel about those topics (positive, negative, or neutral sentiment). When done well, it helps uncover patterns in how customers feel, not just what they say.

While sentiment analysis can be incredibly insightful, it’s not always clear how to use it in practice. Many teams struggle to apply it and end up asking: when should sentiment analysis be used, and what does it actually help you do?

In this article, we explain where sentiment analysis fits within the market research workflow and highlight practical sentiment analysis use cases that show how teams apply it to answer specific research questions and surface meaningful patterns in qualitative data.

What Is Sentiment Analysis?

Sentiment analysis is the process of examining written feedback to understand both what people are talking about and how they feel about it. In market research, it’s commonly applied to survey responses, reviews, customer feedback, social media comments, and campaign responses.

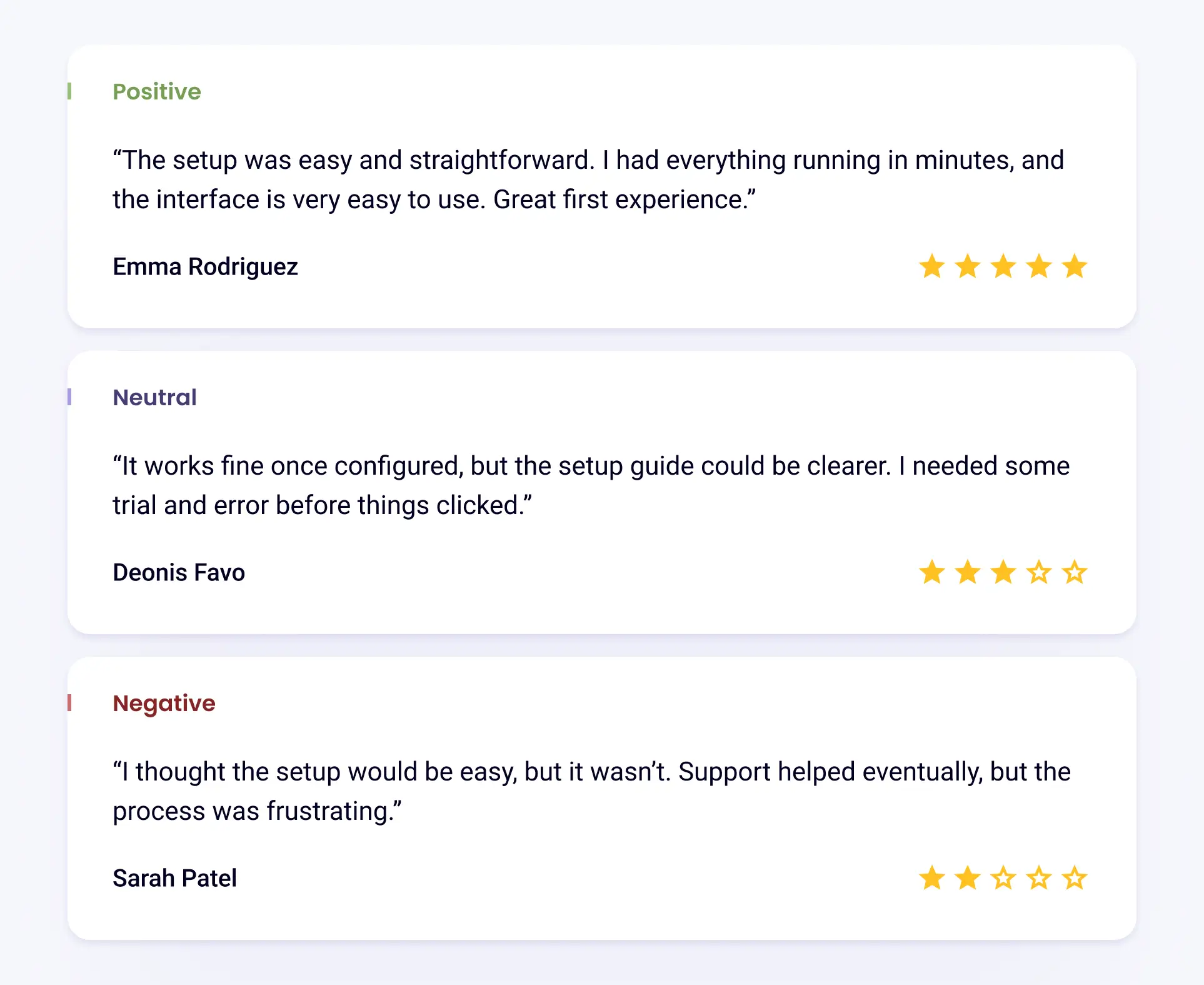

For example, consider two responses to the same survey question:

- “The setup was easy.”

- “I thought the setup would be easy, but it wasn’t.”

Even though both responses mention the same idea, sentiment analysis would flag them differently. The first reflects a positive experience, while the second signals disappointment. That distinction helps researchers see not just what topics are being discussed, but whether people are reacting well or poorly to them.

Benefits of Sentiment Analysis for Market Research Teams

Many research teams face the same challenge: they have plenty of feedback but no clear way to decide what deserves attention first.

Imagine reviewing hundreds of open-ended survey responses after a product launch. Customers mention pricing, onboarding, features, and support. All of it feels important. Without a way to understand emotional intensity, everything looks equally urgent.

Sentiment analysis adds that missing layer. It helps researchers see which topics people care about emotionally, not just which topics appear most often.

With that context in mind, here’s what sentiment analysis makes possible in practice:

- Identify risks earlier: When negative or frustrated sentiment starts increasing around a specific theme, research teams can flag potential issues before they show up as churn, complaints, or declining adoption.

- Support clearer product and messaging decisions: By analyzing how people react emotionally to features, claims, or positioning, researchers can explain why certain ideas resonate and why others create confusion or resistance.

- Add depth to audience and segment analysis: Layering sentiment on top of demographics, roles, or usage patterns reveals how different groups experience the same product in very different ways.

- Strengthen insight storytelling for stakeholders: Instead of reporting that “customers mentioned X,” researchers can show whether customers are calm, confused, excited, or frustrated, which makes insights easier for non-research teams to understand and act on.


7 Use Cases for Sentiment Analysis

To illustrate these use cases, imagine a music production company that runs an online platform where independent artists upload tracks, collaborate, and license music for film and ads. The company collects feedback through surveys, support tickets, reviews, and social media comments.

Each use case below shows how the research team uses sentiment to decide what matters most.

1. Understand What Customers Really Want

Using sentiment analysis helps research teams understand not just what customers mention, but how strongly they feel about those topics. It shows whether feedback reflects mild suggestions, confusion, or serious frustration, which is critical when deciding what deserves immediate attention.

Example:

Artists mention “export settings” in feedback. The topic doesn’t dominate surveys, so it seems low priority.

Sentiment analysis shows something different. Mentions of export settings carry strong frustration. Artists describe losing hours of work or missing deadlines. The emotional tone is intense, not casual.

Even though the problem has been mentioned only a few times, the intensity of the frustration leads the research team to flag it before it becomes a churn driver.

2. Measure Emotional Reaction to Campaigns

Sentiment analysis helps research teams evaluate how audiences emotionally react to marketing messages. This is especially important for paid campaigns, where early emotional signals can indicate whether or not a message is building trust.

Example:

The music production company launches a paid campaign promoting “studio-quality sound in minutes.” The campaign is expensive and time-sensitive.

Before scaling spend, the research team reviews sentiment from early feedback. Artists respond positively to the sound quality claim but negatively to the phrase “in minutes,” which triggers skepticism.

Midway through the campaign, sentiment analysis of comments and replies shows rising frustration around setup time. The marketing team adjusts messaging and releases a short walkthrough video while the campaign is still running, not after it ends.

Sentiment analysis here protects budget and brand credibility at the same time.

3. Monitor Brand Perception Over Time

Tracking sentiment over time helps researchers see how brand perception is changing beneath the surface. Shifts in emotional tone can reveal growing dissatisfaction or declining trust before it becomes obvious through complaints.

Example:

The number of brand mentions of the production company stays steady across forums and social media. Nothing looks wrong on the surface. Sentiment trends, however, show a slow rise in negative emotion around reliability and performance. No single comment is dramatic, but the direction is clear.

The research team raises this early signal to product leaders, who investigate infrastructure issues before reputation damage becomes visible.

4. Evaluate Reactions to Product Features or Updates

Sentiment analysis helps research teams understand how people feel about product changes, which makes it easier to distinguish between feature problems and issues related to onboarding, expectations, or learning curves.

Example:

The company releases a collaboration feature allowing artists to co-edit tracks.

Sentiment analysis highlights that experienced producers respond positively, while new users express confusion and anxiety. The feature itself works, but the emotional response reveals an onboarding problem, not a product flaw.

That distinction keeps the team from rolling back a valuable feature. Instead, the team focuses its effort on onboarding that teaches new users how to use the feature properly.

5. Identify Friction Across the Customer Journey

By applying sentiment analysis across multiple feedback sources, research teams can pinpoint which stages of the experience are creating friction.

Example:

Sentiment analysis across feedback sources reveals where frustration clusters along the journey. For the music production company:

- Onboarding feedback is mostly positive

- Support interactions skew negative, with comments describing replies as “generic” or “slow”

This pattern tells researchers that the product isn’t the problem; the support experience is. Without sentiment, both areas might look equally fine.

6. Compare Sentiment Across Competitors

Emotional patterns in competitor feedback often reveal strengths and weaknesses that feature comparisons miss.

Example:

When analyzing reviews and open-ended feedback about competing music production platforms, the research team notices a clear emotional pattern. Artists using competitor tools frequently express frustration around licensing limitations, such as restrictive terms and difficulty monetizing work.

In contrast, sentiment around this company’s licensing model is consistently positive. Artists describe feeling “free,” “protected,” and “confident” when sharing or selling their music.

This difference doesn’t show up as clearly in feature lists or pricing comparisons. On paper, the platforms look similar. Sentiment reveals how artists feel about using them.

The research team shares this insight with marketing, which adjusts positioning to focus on creative freedom and ownership instead of technical features or price points.

7. Use Social Listening to Capture Unprompted Feedback

Sentiment analysis helps researchers learn from feedback that customers share voluntarily, without being prompted by surveys or forms. These unfiltered conversations often carry stronger emotion than survey responses.

Example:

Not all artists respond to surveys. Some prefer to share opinions publicly: on forums, in community groups, or in social media threads such as those on Reddit.

When the research team analyzes sentiment in these unprompted spaces, a recurring issue appears: frustration around collaboration delays. Artists complain about waiting on shared files and version conflicts.

Interestingly, this issue rarely appears when artists are asked about collaboration directly in surveys. The structured format doesn’t seem to prompt the same candor as public discussion.

Unprompted feedback often surfaces problems people don’t think to mention when asked directly. Sentiment analysis helps researchers capture these blind spots and bring real-world frustrations into the research process.


Challenges of Sentiment Analysis in Market Research Data

The use cases above show how sentiment analysis helps research teams make sense of large volumes of qualitative feedback. But applying sentiment analysis in real research isn’t always easy. Language is messy, people express emotions indirectly, and context matters more than most tools expect.

Understanding these challenges and how teams typically address them is critical to using sentiment analysis responsibly and effectively.

Sarcasm and Subtle Language

One of the most common challenges is sarcasm. Feedback can sound positive on the surface while clearly expressing frustration. For example, an artist might write, “Love losing my work five minutes before a deadline.”

A basic sentiment approach could misclassify this as positive because of the word “love,” even though the intent is negative.

Traditional keyword-based sentiment analysis tools and methods often fail in these cases because they rely on predefined word lists or scoring systems. This is where generative AI-powered sentiment analysis tools like Blix really shine: they interpret nuance, sarcasm, and context much as a human reader would.
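To see why word lists struggle with sarcasm, here is a minimal, deliberately naive keyword-based scorer in Python. The `POSITIVE_WORDS` and `NEGATIVE_WORDS` sets and the `keyword_sentiment` function are hypothetical illustrations, not the internals of any particular tool; they only show how counting matched words can misread intent.

```python
import re

# Hypothetical, tiny word lists for illustration; real lexicons are far larger.
POSITIVE_WORDS = {"love", "amazing", "easy", "great", "free", "confident"}
NEGATIVE_WORDS = {"frustrating", "slow", "generic", "hate", "broken"}


def keyword_sentiment(text: str) -> str:
    """Label text by counting matches against fixed word lists."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = sum(t in POSITIVE_WORDS for t in tokens) - sum(
        t in NEGATIVE_WORDS for t in tokens
    )
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


sarcastic = "Love losing my work five minutes before a deadline."
print(keyword_sentiment(sarcastic))  # prints "positive": "love" matches, nothing negative does
```

The same scorer also labels the earlier response “I thought the setup would be easy, but it wasn’t.” as positive, because “easy” matches and nothing negative does. Larger lexicons and negation rules reduce some of these errors, but the approach still has no notion of the gap between what the words say and what the writer means.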

Mixed Emotions in a Single Response

Many real responses include both praise and criticism. Consider a comment like, “The sound quality is amazing, but the setup process is frustrating.” If this gets flattened into a single “neutral” label, important insight is lost.

To handle this, market research teams often:

- Break responses down by topic or theme before looking at sentiment

- Use mixed sentiment as a signal that tradeoffs exist, not that feedback is unclear

This allows researchers to say, “People like this part but struggle with that part,” which is far more actionable than a single overall score.
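The topic-first approach can be sketched in Python as follows. This minimal example assumes each response has already been split into topic-level mentions with a sentiment label, whether by manual coding or by a model; the `TopicMention` structure, the function names, and the sample data are hypothetical. It counts sentiment per topic instead of per response and flags responses that praise one topic while criticizing another.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TopicMention:
    """One topic mentioned in one response, with the sentiment expressed about it.

    Hypothetical structure: assumes an upstream step (manual coding or a model)
    has already split each response into topic-level mentions.
    """
    response_id: int
    topic: str
    sentiment: str  # "positive", "negative", or "neutral"


def sentiment_by_topic(mentions: list[TopicMention]) -> dict[str, Counter]:
    """Count sentiment labels separately for each topic."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for m in mentions:
        counts[m.topic][m.sentiment] += 1
    return dict(counts)


def mixed_responses(mentions: list[TopicMention]) -> set[int]:
    """Return IDs of responses that are positive about one topic and negative about another."""
    labels: dict[int, set[str]] = defaultdict(set)
    for m in mentions:
        labels[m.response_id].add(m.sentiment)
    return {rid for rid, s in labels.items() if {"positive", "negative"} <= s}


mentions = [
    # "The sound quality is amazing, but the setup process is frustrating."
    TopicMention(1, "sound quality", "positive"),
    TopicMention(1, "setup", "negative"),
    TopicMention(2, "setup", "negative"),
    TopicMention(3, "sound quality", "positive"),
]

print(sentiment_by_topic(mentions))
print(mixed_responses(mentions))  # {1}
```

Keeping the labels at the topic level is what lets the mixed response contribute one positive count to sound quality and one negative count to setup, rather than cancelling out into a single neutral score.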

Variations in Phrasing

People can describe problems using very different wording. Some respondents are direct and emotional, while others use softer or more technical language to express the same frustration. For example, someone may write, “This is painfully slow,” while another may write, “The process feels longer than expected.”

On its own, the second comment sounds neutral or mild. But when these phrases appear repeatedly alongside support requests, they signal dissatisfaction just as clearly as more emotional language.

To account for differences in how people phrase feedback, research teams should:

- Analyze sentiment alongside themes, not in isolation

- Compare sentiment trends over time rather than relying on one-off scores
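As a rough sketch of comparing trends rather than one-off scores, the Python below computes the share of negative mentions per theme per period and fits a simple least-squares slope to that series. It assumes every mention is already tagged with a period, a theme, and a sentiment label, and that period labels are `YYYY-MM` strings (so they sort chronologically); the function names and the sample data are hypothetical.

```python
from collections import defaultdict


def negative_share_by_period(records):
    """Return {theme: {period: share of mentions labeled negative}}.

    `records` is an iterable of (period, theme, sentiment) tuples.
    Periods are assumed to be "YYYY-MM" strings, so sorting them is chronological.
    """
    totals = defaultdict(lambda: defaultdict(int))
    negatives = defaultdict(lambda: defaultdict(int))
    for period, theme, sentiment in records:
        totals[theme][period] += 1
        if sentiment == "negative":
            negatives[theme][period] += 1
    return {
        theme: {p: negatives[theme][p] / n for p, n in sorted(periods.items())}
        for theme, periods in totals.items()
    }


def trend_slope(values):
    """Least-squares slope of `values` against their position (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


# Hypothetical data: 10 "reliability" mentions per month, with a rising negative count.
records = []
for period, negative, total in [("2024-01", 1, 10), ("2024-02", 2, 10), ("2024-03", 3, 10)]:
    records += [(period, "reliability", "negative")] * negative
    records += [(period, "reliability", "neutral")] * (total - negative)

shares = negative_share_by_period(records)["reliability"]
print(shares)
print(trend_slope(list(shares.values())))
```

In the sample data, volume stays flat at ten mentions a month while the negative share climbs from 10% to 30%, the kind of slow shift described in the brand-perception example. A positive slope is a cue to investigate, not proof of a problem; with small monthly samples, a few extra comments can move the share noticeably.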

These challenges highlight why sentiment analysis can’t be treated as a simple label applied to text. Knowing that feedback is positive or negative only becomes useful when that emotion is tied to specific topics, features, or stages of the experience.

AI-powered sentiment analysis tools designed for qualitative analysis at scale can help research teams organize open-ended feedback into themes, making it easier to see where frustration, satisfaction, or uncertainty is concentrated and decide what to investigate next.

Quick & Easy Sentiment Analysis with Blix

Blix helps market research teams make sense of open-ended feedback by combining sentiment analysis with automatic theme detection and coding, powered by generative AI.

Unlike keyword-based tools, Blix understands the full context of each response, capturing nuance, sarcasm, and emotional tone with human-like accuracy.

This makes it easy to identify where frustration, satisfaction, or confusion is coming from, and what’s driving it, so you can deliver better research, faster.